Okay, Deep Breath: Another AI 'What Now?' Moment

Okay, deep breath. Another Monday, another piece of tech news that makes you just… tilt your head a little. You know those moments? Like when you hear about AI writing symphonies, or passing bar exams, or, heck, even generating perfectly passable pizza recipes. Wild stuff. But then you stumble across something like this headline about West Virginia, and suddenly, my slightly tired tech writer brain goes, "Wait, what?"

We're talking about an AI candidate, folks. In West Virginia. As in, an artificial intelligence, a collection of algorithms and data, potentially running for public office. Hoppy Kercheval, a name many in WV will recognize, recently shared his take on this. An opinion piece, mind you, and a pretty thought-provoking one at that. He's asking the questions, and frankly, I'm right there with him, trying to untangle this digital knot.

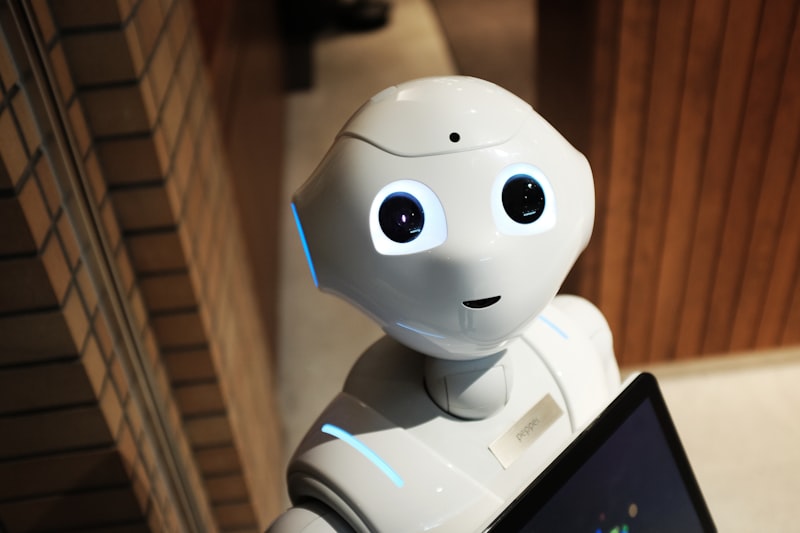

First off, let’s get into what an "AI candidate" actually is. Because, let’s be honest, it conjures up all sorts of images, right? Is it a robot in a suit? A disembodied voice in a campaign office? Or maybe, just maybe, it’s a sophisticated chatbot, a hyper-advanced language model fed every policy paper, every local newspaper article, every constituent email from the last twenty years. My bet's on the latter, a kind of digital political oracle designed to generate responses, policy stances, and campaign promises based on vast datasets. It’s not quite HAL 9000, but it’s definitely not your grandpa’s local councilman either. Or is it? That’s the thing, it’s all so new, so… undefined.

Think about it. We’ve seen AI make incredible strides in mimicking human conversation, generating images, even writing entire scripts. It’s all about pattern recognition, really. Take enough input, understand the relationships, and then predict or generate relevant output. So, for a political candidate, you’d train it on everything from legislative text to public sentiment data, economic indicators, environmental reports – the works. The idea, presumably, is to have a candidate that is always "on," always informed, and theoretically, always rational. No bad hair days, no gaffes caught on a hot mic, no questionable tweets from 2008 coming back to haunt them. Sounds… efficient, doesn't it?

But efficiency isn't everything, is it? Politics, at its core, is a human endeavor. It’s about empathy, about understanding the nuanced struggles of real people, about compromise and charisma. Can an algorithm truly grasp the despair of a struggling single parent trying to make ends meet in a small Appalachian town? Can it feel the frustration of a small business owner battling endless red tape? I mean, it can process the data points related to those situations, sure. It can churn out a policy proposal designed to address them. But can it feel them? Can it inspire hope or build genuine community? That’s a whole different ballgame.

The Promise and Peril of Algorithmic Governance

The proponents of such a system, and Hoppy Kercheval’s piece certainly touches on this, might argue that an AI candidate brings unparalleled objectivity. Imagine a politician free from personal biases, from the need to secure donations, from the influence of powerful lobbyists. An AI, in theory, would just look at the data, identify the optimal solution, and propose it. Pure, unadulterated logic applied to governance. Sounds almost utopian, doesn't it? A dream for anyone fed up with gridlock and partisan bickering.

Plus, accessibility! An AI candidate could potentially engage with every single constituent, 24/7. No more waiting for town halls, no more brief, rushed interactions. You could, in theory, chat with your AI representative at 3 AM about that pothole on your street, or your concerns about school funding. It could provide instant, data-backed answers, perhaps even customized to your specific situation. That's a powerful tool for civic participation, a way to truly democratize access to political dialogue. It’s the ultimate "representative" in some ways, always listening, always processing.

But then, my tired brain kicks in with the "hold on a minute" thoughts. Objectivity? Whose objectivity? AI models are trained on human-generated data. And human data, well, it’s messy. It’s full of our biases, our historical injustices, our societal quirks. If you feed an AI a diet of past political decisions, it might just learn to perpetuate the status quo, even if the status quo is inherently unfair or inefficient. There’s no magic switch for "unbiased." It's an ideal, not a default setting, and one that takes immense, painstaking effort to even approach, let alone achieve. And even then, it's always a moving target.
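To make the status-quo worry a bit more concrete, here's a toy sketch in Python. Everything in it is an assumption for illustration: the records in `HISTORY` are invented, and `train` and `predict` are hypothetical helpers, not how any real AI candidate works. The "model" simply learns the most common past decision for each district type, which is about the crudest form of pattern recognition there is.

```python
from collections import Counter, defaultdict

# Hypothetical historical records: (district_type, funding_decision).
# The data is invented purely for illustration.
HISTORY = [
    ("urban", "approved"), ("urban", "approved"), ("urban", "approved"),
    ("urban", "denied"),
    ("rural", "denied"), ("rural", "denied"), ("rural", "denied"),
    ("rural", "approved"),
]


def train(records):
    """Learn the most common past decision for each district type."""
    counts = defaultdict(Counter)
    for district, decision in records:
        counts[district][decision] += 1
    # Keep only the majority decision per district type.
    return {d: c.most_common(1)[0][0] for d, c in counts.items()}


def predict(model, district):
    """Return the decision seen most often for this district type."""
    return model[district]


model = train(HISTORY)
print(predict(model, "urban"))  # approved
print(predict(model, "rural"))  # denied
```

Nothing in the code "decides" that rural requests deserve less; it just replays the skew already baked into the records. Real models are vastly more sophisticated, but the same dynamic is why the choice of training data matters so much.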

And accountability? This is a big one. Who's responsible if the AI candidate makes a decision that has unforeseen, negative consequences? The programmers? The data scientists? The citizen who voted for it? It’s a classic "black box" problem, really. We might see the output, the policy recommendation, but understanding the complex layers of algorithms that led to it? That’s often opaque, even to its creators. That opacity in government? Not exactly a recipe for trust, is it? We struggle enough with human accountability in politics; imagine trying to hold a non-human entity to account. It’s a philosophical minefield, if nothing else.

The Human Element: Can AI Inspire?

I remember this one time, during a particularly frustrating local election, I went to a small town hall meeting. The candidate, a slightly disheveled but passionate woman, spoke for maybe ten minutes. She wasn't slick, didn't have all the answers perfectly polished. But she talked about her kids, about her neighbor's struggling farm, about a community project she’d helped get off the ground. And you know what? People listened. They asked tough questions, but they also nodded. They felt a connection. She wasn't just a platform; she was a person. That human touch, that ability to connect on an emotional level, to inspire, to rally people around a cause – can an AI do that? Truly?

I mean, an AI could deliver a perfectly crafted speech, optimized for emotional impact based on sentiment analysis. It could mimic human cadence, tone, even facial expressions (if it had an avatar). But is that genuine? Or is it just a very convincing performance? There's a difference between processing emotions and having them. And in leadership, I think that difference matters. A lot. We elect leaders not just for their intellect, but for their character, their resilience, their ability to lead us through crises with a steady hand and, yes, a human heart. This AI candidate, it just... doesn't have a heart. Or a hand. You get my drift.

The very idea of an AI candidate also raises questions about the future of political engagement. If our representatives are algorithms, what does that mean for our own role as active citizens? Does it make us more engaged because we can interact constantly? Or does it make us more passive, simply delegating the hard work of governance to a machine? It's a fundamental shift in how we might view democracy itself. Is democracy about representation of human will, human needs, human values? Or is it about optimal decision-making, regardless of the decision-maker's sentience?

So, Where Do We Go From Here?

This West Virginia development, highlighted by Hoppy Kercheval, isn't just a quirky news item. It’s a canary in the coal mine, a signal that the boundaries of AI's societal integration are being pushed, rapidly. This is no longer purely theoretical. And while it offers intriguing possibilities for efficiency and data-driven policy, it also opens up a Pandora's box of ethical, philosophical, and practical challenges that we, as a society, are barely equipped to handle.

We need to have serious conversations about the guardrails, about transparency, about accountability. Who decides what data an AI candidate is trained on? Who audits its decision-making process? What happens when an AI candidate's logic clashes with deeply held human values? These aren't just technical questions; they're questions about the very fabric of our society and how we choose to govern ourselves. It's not just about what AI can do, but what it should do, and what we allow it to do.

Honestly, thinking about an AI politician makes me feel even more tired than usual. It’s a fascinating concept, absolutely. But also… a little unsettling. A lot unsettling, actually. Because while I appreciate a good algorithm as much as the next tech enthusiast, there are some things, some very human things, that I just don't think can be coded. Not yet, anyway. Maybe never.

So, is this the future? A future where elections are less about personality and more about processing power? Where charisma is replaced by optimized rhetoric? I don't know. But I do know it makes you think. A lot.

🚀 Tech Discussion:

What do you think? If an AI candidate could genuinely process all the data and make 'optimal' decisions, would you vote for it? Or is the human element simply irreplaceable in governance?
